In this guide, I'll walk through what AI risk management is in 2026, the categories of risk worth tracking, the frameworks that matter, and how to run an assessment that holds up under scrutiny.

TL;DR

- AI risk management is its own discipline now, not a side extension of traditional ERM, as AI introduces failure modes (drift, hallucinations, prompt injection, foundation model dependency) that older risk programs weren't built to catch.

- The risk surface is wider than most teams realize, spanning bias, privacy, security, performance, third-party model dependency, agentic tool misuse, and shadow AI usage that nobody in compliance knows about.

- The right approach in 2026 is risk-tiered. Not every AI system needs the same level of oversight, and pretending otherwise burns out the team and produces governance theater.

- Frameworks like the NIST AI RMF, ISO/IEC 42001, and the EU AI Act each cover a different slice of the program, and mature teams stitch two or three together rather than running on one.

- Tools matter because tracking dozens of AI systems across owners, lifecycle stages, and review cadences breaks spreadsheets fast, and connected GRC platforms like SmartSuite can centralize models, assessments, controls, and remediation in one workspace.

What is AI risk management?

AI risk management is the ongoing work of identifying, assessing, treating, and monitoring the risks that come from how your organization builds, buys, deploys, and uses AI systems.

It's the operational layer of a broader AI governance program, drawing on disciplines that used to live in separate parts of the business: enterprise risk management, model risk management, responsible AI practice, and machine learning operations.

It gets treated as its own thing because AI breaks some assumptions that traditional risk frameworks rely on.

A regulatory financial model gets validated on a defined cycle and behaves consistently between reviews. A production language model can drift in a week.

AI vendor reviews ask questions traditional ones don't, like whether the foundation model your vendor depends on changed behavior last Tuesday.

An effective AI risk program does several things continuously:

- Tracks every model, AI agent, and AI-touched workflow within the company.

- Assigns clear ownership to each.

- Runs assessments against a defined methodology.

- Links findings back to the controls and remediation that close them.

It's not a project with an end date. It's an operating discipline that runs alongside the rest of your GRC stack.

What are the different types of AI risk management?

The phrase "AI risk" gets used loosely, so it helps to break the field into the categories GRC teams, AI governance specialists, and risk leaders deal with day to day:

- Bias and fairness risk covers discriminatory outcomes that come from training data, algorithmic design, or deployment context. Current AI regulation addresses this category most directly.

- Privacy risk covers training data exposure, membership inference attacks, and accidental leakage of personal or confidential information into model outputs.

- Security risk is its own evolving beast in 2026: prompt injection, jailbreaks, model theft, adversarial inputs, and the new attack surface that comes with agentic systems calling tools and APIs without a human in the loop.

- Performance and accuracy risk captures hallucinations, model drift, and the gap between how a model behaves in testing versus six months into production with real-world inputs.

- Compliance risk is about whether your AI systems can hold up against the EU AI Act, the Colorado AI Act, sectoral rules from finance and healthcare, and the documentation requirements each one creates.

- Operational risk covers what happens when an AI system fails or behaves unexpectedly: cascading errors in agentic workflows, business processes that quietly came to depend on a model, and the recovery work that follows when that model fails.

- Third-party AI risk is the vendor problem with extra steps. Your SaaS vendor's foundation model provider can change behavior, deprecate a model version, or hit rate limits, and the downstream effects show up in your workflows.

- Reputational and ethical risk is the public-facing version of all of the above. A model that produces bad output once can become a brand crisis fast.

- Shadow AI risk cuts across all of these. It's the employee pasting customer data into a public chatbot without anyone in IT or compliance ever finding out.

Why is AI risk management important?

Three things are happening at once in 2026: regulators are enforcing real obligations, customers are asking how you govern AI in procurement reviews, and boards want to know what the company's AI exposure actually looks like.

Being unprepared for any of those is expensive.

Beyond the external pressure, AI risk management does several things internally:

- It protects the organization from harm: discriminatory outputs, privacy violations, security incidents, and model failures that ripple into business processes.

- It creates a defensible position when an auditor, a regulator, or a customer's procurement team asks how you govern AI.

- And it gives leadership a structured way to weigh AI investments against their downsides, which matters more every quarter as AI moves into core workflows.

The EU AI Act's high-risk system obligations are scheduled to take effect in August 2026 (although a proposal would push that date to December 2027), and U.S. state-level AI laws are stacking up faster than most teams can track them.

What are the benefits of managing AI risk?

The payoff from running this well compounds over time:

- Fewer incidents that go public, because you catch issues in monitoring instead of in a viral social media post.

- Faster procurement cycles when enterprise customers and regulated buyers ask hard questions about how you govern AI.

- Cleaner AI investment decisions, because leadership weighs new AI projects against a real view of risk appetite instead of FOMO.

- Lower compliance cost over time, because the work to demonstrate EU AI Act conformity, NIST AI RMF alignment, or ISO/IEC 42001 certification overlaps heavily once the foundation is built.

- Better model performance, because the same monitoring infrastructure that catches risk also catches drift, regression, and quality issues.

- More room to innovate. Teams that know where the guardrails are move faster inside them, instead of slowing every project down with ad hoc risk debates.

How can you approach AI risk management?

There's no shortage of opinions on this. The approach I keep coming back to in 2026 sorts AI systems into tiers and scales control intensity to match.

The trap most teams fall into is treating every AI system the same way: same intake form, same risk assessment template, same review cadence.

That collapses fast. The AI systems in a typical mid-market company range from "a chatbot that suggests help articles" to "an automated underwriting model that decides who gets a loan." Those need very different oversight.

A practical tiering looks something like this:

- Tier 1: Critical AI systems. Models that make or materially influence decisions about people (hiring, lending, medical) or that drive significant operational or financial outcomes.

These need formal model risk management, human-in-the-loop checkpoints, scheduled bias and performance reassessments, and full documentation aligned to whichever framework your regulators care about.

- Tier 2: Operational AI systems. Models that automate work but don't make consequential decisions about individuals.

Customer service routing, content classification, internal productivity tools. These need an inventory entry, an owner, a baseline risk assessment, and continuous monitoring against drift.

- Tier 3: Lightweight AI usage. Embedded AI features inside SaaS tools your teams already use.

These need a vendor risk assessment, basic guardrails on what data goes in, and a review cycle tied to vendor contract renewals.

- Tier 4: Shadow and ad hoc AI. Employees using public AI tools with company data.

These need policy, training, monitoring, and (ideally) a sanctioned alternative so people don't go around the rules to get work done.

The tier determines the controls. You don't run a 40-page assessment on a help-article chatbot, and you don't wave through an underwriting model with a checkbox.

The EU AI Act takes the same risk-based logic and codifies it into legally defined categories (prohibited, high-risk, limited-risk, and minimal-risk) based on use case rather than operational impact.
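The tiering above can be sketched as a small classification routine. This is a minimal illustration, not a prescribed method: the `AISystem` fields, the order of the checks, and the `BASELINE_CONTROLS` lists are assumptions I've drawn from the tier descriptions in this section, and your own criteria will likely be more detailed.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    CRITICAL = 1     # decisions about people, or significant business outcomes
    OPERATIONAL = 2  # automates work without consequential decisions about individuals
    LIGHTWEIGHT = 3  # AI features embedded in SaaS tools already in use
    SHADOW = 4       # public AI tools used with company data, outside sanctioned channels


@dataclass
class AISystem:
    # All field names are hypothetical; adapt them to your own intake form.
    name: str
    sanctioned: bool
    decides_about_people: bool
    significant_business_impact: bool
    embedded_vendor_feature: bool


# Baseline controls per tier, paraphrased from the tier descriptions above.
BASELINE_CONTROLS = {
    Tier.CRITICAL: ["formal model risk management", "human-in-the-loop checkpoints",
                    "scheduled bias and performance reassessment", "full documentation"],
    Tier.OPERATIONAL: ["inventory entry", "named owner",
                       "baseline risk assessment", "drift monitoring"],
    Tier.LIGHTWEIGHT: ["vendor risk assessment", "data input guardrails",
                       "review at contract renewal"],
    Tier.SHADOW: ["policy", "training", "monitoring", "sanctioned alternative"],
}


def assign_tier(system: AISystem) -> Tier:
    """Assign a tier using the assumed check order described in the lead-in."""
    if not system.sanctioned:
        return Tier.SHADOW
    if system.decides_about_people or system.significant_business_impact:
        return Tier.CRITICAL
    if system.embedded_vendor_feature:
        return Tier.LIGHTWEIGHT
    return Tier.OPERATIONAL


chatbot = AISystem("help-article chatbot", True, False, False, False)
underwriting = AISystem("automated underwriting model", True, True, True, False)
print(assign_tier(chatbot).name, assign_tier(underwriting).name)
```

Keeping the controls in a lookup keyed by tier means a change to the tier criteria never requires touching the control lists, and vice versa.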

How to conduct an AI risk management assessment

An AI risk assessment is the structured work of figuring out what AI you have, how risky each piece is, what controls you need, and whether those controls are still working. It runs in four stages:

Stage 1: Inventory

You can't govern what you can't see, and most companies have more AI in production than anyone thinks.

A real inventory captures every AI system in use, including in-house models, vendor AI features, embedded LLM capabilities, and pilot projects.

It tracks the owner, the use case and decision impact in plain language, and the lifecycle stage: in development, in pilot, in production, retired.

Shadow AI usually starts becoming visible at this stage too.
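As a rough sketch of what one inventory record might hold, here is the Stage 1 description expressed as a data structure. The `InventoryEntry` field names and the `inventory_gaps` helper are hypothetical; the lifecycle stages are the four listed above.

```python
from dataclasses import dataclass
from enum import Enum


class LifecycleStage(Enum):
    DEVELOPMENT = "in development"
    PILOT = "in pilot"
    PRODUCTION = "in production"
    RETIRED = "retired"


@dataclass
class InventoryEntry:
    name: str
    source: str           # e.g. "in-house model", "vendor AI feature", "embedded LLM"
    owner: str            # an empty string means nobody has claimed the system yet
    use_case: str         # plain-language description
    decision_impact: str  # plain-language description
    stage: LifecycleStage


def inventory_gaps(entries):
    """Return names of non-retired systems missing an owner or a decision-impact note."""
    return [
        entry.name
        for entry in entries
        if entry.stage is not LifecycleStage.RETIRED
        and (not entry.owner.strip() or not entry.decision_impact.strip())
    ]


entries = [
    InventoryEntry("support chatbot", "vendor AI feature", "Support lead",
                   "suggests help articles", "low: advisory only",
                   LifecycleStage.PRODUCTION),
    InventoryEntry("churn forecast", "in-house model", "",
                   "predicts account churn", "", LifecycleStage.PILOT),
]
print(inventory_gaps(entries))
```

A gap report like this is one simple way to spot systems that made it into production without an owner.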

Stage 2: Classification and risk assessment

For every system in the inventory, you assess across the risk categories that matter for that system's context.

A customer-facing recommendation model gets a deeper bias and fairness assessment than an internal forecasting model.

A model trained on personal data needs privacy and re-identification testing that a productivity tool doesn't.

The assessment produces a tier, a set of identified risks, and a residual risk rating after planned controls are applied.

This is also the stage where you map each system to whichever frameworks your program is aligned with: NIST AI RMF functions, ISO/IEC 42001 clauses, EU AI Act risk categories.
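To make the idea of a residual risk rating concrete, here is one common way to score it. Everything in this sketch is an assumption rather than something the frameworks prescribe: the 1 to 5 scales, the multiplicative scoring, the `control_effectiveness` factor, and the rating thresholds are illustrative choices you would replace with your own methodology.

```python
def inherent_score(likelihood: int, impact: int) -> int:
    """Score risk before controls on an assumed 1-5 by 1-5 scale (range 1 to 25)."""
    for value in (likelihood, impact):
        if not 1 <= value <= 5:
            raise ValueError("likelihood and impact must be between 1 and 5")
    return likelihood * impact


def residual_score(likelihood: int, impact: int, control_effectiveness: float) -> float:
    """Scale the inherent score down by the assumed effectiveness of planned controls.

    control_effectiveness is a fraction from 0.0 (no effect) to 1.0 (fully mitigated).
    """
    if not 0.0 <= control_effectiveness <= 1.0:
        raise ValueError("control_effectiveness must be between 0.0 and 1.0")
    return inherent_score(likelihood, impact) * (1.0 - control_effectiveness)


def rating(score: float) -> str:
    """Map a score to a label using illustrative thresholds."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# A likely, severe risk that planned controls are assumed to cut in half.
before = inherent_score(4, 5)
after = residual_score(4, 5, 0.5)
print(rating(before), rating(after))
```

Recording both the inherent and residual numbers shows reviewers how much of the rating depends on controls that still have to be operated and evidenced.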

Stage 3: Control selection and treatment

Once a system is tiered and assessed, the controls follow.

For high-risk systems, controls usually include human-in-the-loop review, scheduled bias testing, performance threshold monitoring, model card documentation, and incident escalation paths.

For lower-risk systems, controls might be a usage policy, a vendor risk assessment, and a quarterly check-in. Each control gets an owner, an evidence requirement, and a review cadence.
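The three attributes each control carries (owner, evidence requirement, review cadence) translate naturally into a record with a computed due date. This is a minimal sketch; the `Control` class and its field names are hypothetical, and the 90-day cadence is only an example of a quarterly check-in.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Control:
    name: str
    owner: str
    evidence_required: str
    cadence_days: int     # review interval in days
    last_reviewed: date

    def next_review_due(self) -> date:
        """Date by which the next review should happen."""
        return self.last_reviewed + timedelta(days=self.cadence_days)

    def is_overdue(self, today: date) -> bool:
        """True once today is past the due date."""
        return today > self.next_review_due()


bias_testing = Control(
    name="Scheduled bias testing",
    owner="ML lead",
    evidence_required="signed test report",
    cadence_days=90,
    last_reviewed=date(2026, 1, 1),
)
print(bias_testing.next_review_due(), bias_testing.is_overdue(date(2026, 5, 1)))
```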

Stage 4: Operate and monitor

This is the stage that separates a real program from a slide deck.

AI behavior changes with new data, new model versions, and new prompts. Controls decay. New systems show up that weren't on the inventory last quarter.

A working program runs continuous monitoring on top of scheduled reassessments, with dashboards that show leadership where AI risk concentration is heaviest, what's overdue for review, and what issues are open.

The four stages overlap. When Stage 4 surfaces a finding, the system goes back to Stage 2 for re-classification.

When marketing acquires a new tool nobody told the AI team about, Stage 1 starts again. The work is continuous by design.
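The loop from Stage 4 back to Stage 2 can be sketched as a simple threshold check. This is an illustration under stated assumptions: the `needs_reassessment` function, the choice of a single quality metric, and the 0.05 tolerance are all hypothetical, and real monitoring tools use richer drift statistics.

```python
from statistics import mean


def needs_reassessment(baseline: float, recent_scores: list[float],
                       tolerance: float = 0.05) -> bool:
    """Return True when the recent average falls more than `tolerance` below baseline.

    `baseline` is the metric recorded at the last assessment (for example, accuracy),
    and `recent_scores` are the same metric sampled in production since then.
    """
    if not recent_scores:
        raise ValueError("recent_scores must not be empty")
    return baseline - mean(recent_scores) > tolerance


# A stable model stays in Stage 4; a degraded one goes back to Stage 2.
stable = needs_reassessment(0.92, [0.91, 0.90, 0.92])
degraded = needs_reassessment(0.92, [0.90, 0.85, 0.84])
print(stable, degraded)
```

The point of the sketch is the trigger, not the metric: any monitored signal that crosses an agreed threshold should reopen the classification rather than wait for the next scheduled review.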

What are the different AI risk management frameworks?

The same thing that's true for traditional risk management is true here: most mature programs blend two or three frameworks rather than running on one.

The picks depend on your industry, your regulators, and where your existing GRC program is anchored.

- NIST AI Risk Management Framework (AI RMF 1.0) was released by the U.S. National Institute of Standards and Technology in January 2023, with a Generative AI Profile (NIST AI 600-1) added in July 2024.

It's organized around four functions: Govern, Map, Measure, and Manage. It's voluntary, but it's the de facto reference for U.S. organizations and the closest thing the field has to a common vocabulary.

- ISO/IEC 42001:2023 is the first AI management system standard you can get certified against, structured as the AI counterpart to ISO/IEC 27001 (leadership, planning, support, operation, performance evaluation, improvement).

If your buyers ask for AI governance attestation, this is the certification they're starting to ask about.

- The EU AI Act is a regulation, not a framework, but any AI risk program with EU exposure has to map to it.

The high-risk obligations are scheduled to apply from August 2026 under the current text, with provisions for general-purpose AI already in effect.

- The OECD AI Principles and the G7 Hiroshima AI Process sit at the policy layer above any of this.

They don't give you operational controls, but they shape how regulators and standards bodies write the ones that do.

- Sectoral frameworks matter too. CRI's Financial Services AI Risk Management Framework (FS AI RMF) is built specifically for financial services.

NIST's SP 800-218A covers secure development for generative AI and dual-use foundation models.

State-level laws (Colorado AI Act, Illinois AI Video Interview Act, amendments to the Illinois Human Rights Act) also add their own documentation expectations.

The aim isn't framework purity but mapping cleanly to whichever frameworks your auditors, regulators, and buyers care about, with one set of evidence supporting all of them.
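One way to picture "one set of evidence supporting all of them" is a crosswalk from each control to the framework element it supports. The sketch below is illustrative only: the control names and the specific pairings in `CROSSWALK` are my assumptions, not an official mapping, though the NIST function names and ISO/IEC 42001 clause titles are the ones listed above.

```python
# Hypothetical crosswalk: control -> {framework: element it is assumed to support}.
CROSSWALK = {
    "Scheduled bias testing": {
        "NIST AI RMF": "Measure",
        "ISO/IEC 42001": "Performance evaluation",
    },
    "AI system inventory": {
        "NIST AI RMF": "Map",
        "ISO/IEC 42001": "Planning",
    },
    "Incident escalation path": {
        "NIST AI RMF": "Manage",
        "ISO/IEC 42001": "Operation",
    },
}


def evidence_by_framework(crosswalk):
    """Invert the crosswalk so each framework lists (element, control) pairs."""
    result = {}
    for control, references in crosswalk.items():
        for framework, element in references.items():
            result.setdefault(framework, []).append((element, control))
    return result


for framework, items in evidence_by_framework(CROSSWALK).items():
    print(framework, items)
```

Because the evidence is attached to the control rather than to a framework, adding a new framework means adding a column to the crosswalk instead of collecting evidence again.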

What kind of tools can you use for AI risk management?

Tools fall into a few buckets, and which one fits depends on the maturity and scope of your AI program.

- Spreadsheets and shared docs. Most programs start here, with a model inventory in Sheets and a risk register in Excel.

Works for a small program with a handful of systems and a single person keeping it current, but struggles when the inventory grows past what one person can hold in their head.

- Specialized AI governance platforms. Tools like Credo AI, Holistic AI, and IBM watsonx.governance focus specifically on AI risk: model inventories, fairness testing, EU AI Act readiness, policy enforcement.

Strong on the AI-specific work, though they often live separately from the rest of the GRC program.

- Model monitoring and observability tools. Arize, Fiddler, WhyLabs, and Evidently AI watch model behavior in production and flag drift, bias shifts, or performance degradation.

They solve the technical monitoring problem, but aren't governance platforms.

- Compliance automation platforms. Vanta, Drata, Sprinto, and Secureframe have added AI governance modules to their certification automation cores.

Useful if your priority is ISO/IEC 42001 or SOC 2 evidence collection. AI risk can extend beyond what fits inside a certification scope, though.

- Enterprise-grade GRC platforms. Archer, MetricStream, OneTrust, IBM OpenPages, and Onspring all have AI governance capabilities now.

They give depth and enterprise scale at the cost of long implementations and higher price tags.

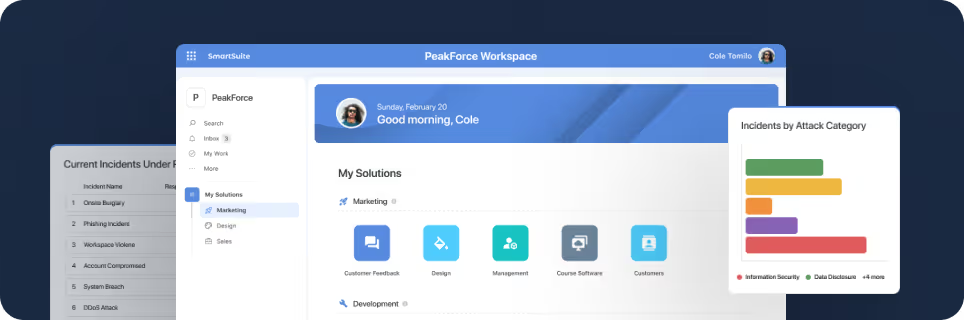

- Connected GRC platforms. A newer category where AI governance lives alongside the rest of the GRC program in a single, flexible workspace, with SmartSuite (that’s us) as the leading example.

SmartSuite is an AI-native work platform that puts AI governance, GRC, and the operational work that surrounds them in a single workspace.

Every AI system the organization runs gets registered in a central inventory with its owner, lifecycle stage, decision impact, and tier classification.

From there, the same record carries the risk assessments, performance reviews, supporting evidence, and remediation work attached to that system, instead of those things living in disconnected tools.

Because the platform is no-code, the AI governance team can shape assessment templates, review workflows, and dashboards around how the business operates.

When a new regulation lands or your buyers start asking for new evidence, the team adjusts the model directly instead of opening a vendor support ticket.

SmartSuite AI handles the slow parts of governance work: pulling structured findings out of vendor questionnaires, drafting first-pass model documentation, and surfacing missing context in incident reports.

AI prepares the suggestions, and humans review and approve the governance decisions, so the audit trail stays clean.

Live dashboards give leadership a current read on AI posture, exception trends, overdue reviews, and compliance readiness, without anyone exporting data into a separate BI tool.

Pricing starts at $15/user/month for the Team plan (minimum 3 users), with solution-based pricing available for regulated enterprises that need scoped access by department or regulatory domain.

It fits well for mid-market companies, regulated industries, and GRC teams that want AI governance to be part of the rest of the risk and compliance program, instead of a separate specialty system someone has to remember to update.

Pick a platform that fits the rest of how your business runs

Two questions decide which platform makes sense:

- How wide does the program need to go?

AI risk is one program inside a bigger GRC stack.

Models connect to vendors, vendors to contracts, contracts to compliance obligations, obligations to controls.

If the platform doesn't connect to the rest of that work, the AI risk team ends up exporting CSVs every time a board report is due.

- How much can the team adapt without writing code?

AI governance is a moving target. Every six months, regulators add a new requirement, and your buyers ask new questions.

The team needs to update assessment templates, review workflows, and reporting on its own. Platforms with rigid templates fight that work.

SmartSuite was built around both questions. Our platform connects AI governance to the rest of GRC, audits, third-party risk, and operational work in one workspace.

The rollout fits in weeks instead of quarters because the platform is configured by the team using it.

And the no-code data model means the AI risk team adapts the program as requirements change, instead of waiting on a vendor release cycle.

➡️ Start a free SmartSuite trial or book a demo to see how your team can manage governance, risk, and compliance in one place.

⚠️ Disclaimer: This article is for general information and isn't legal advice. Regulatory dates, scope, and enforcement details change. Confirm with qualified counsel before making compliance decisions.

SmartSuite provides a work platform for standardizing workflows in the following areas:

- Governance, Risk & Compliance

- IT & Service Ops

- Project / Portfolio Management

- Business Operations